SDXL

Dual text encoders, multi-aspect training, two-stage refinement. ICLR '24 Spotlight, 4,000+ citations. One of the most widely adopted open-source generation models.

Co-founder at Black Forest Labs. Lead author of SDXL. Creator of Kontext. Core contributor to the FLUX model family. Self-taught, 12+ production models, and a pattern of finding the highest-leverage research direction in the room and executing it into something real.

Generative AI researcher and engineer who consistently identifies high-leverage research directions and pushes them from concept through to shipped production systems.

Co-founded Black Forest Labs, which raised a $300M Series B at a $3.25B valuation. Lead-authored SDXL, one of the most widely adopted open-source image generation models. Created Kontext, designing in-context image editing with a two-person core R&D team over roughly two months. Core contributor across the FLUX.1 and FLUX.2 model lines.

His work spans diffusion and flow-matching systems, transformer architectures (MM-DiT, DiT), large-scale distributed training, data curation, representation learning, and multi-modal model design. The common thread is leverage — finding the few design decisions that change the trajectory of a model line, then executing them quickly.

Came into machine learning without a formal degree, teaching himself deep learning and generative modeling through direct implementation and open-source work. Before that, he spent seven years across visual effects, media production, and software development, which partly explains his strong instinct for both aesthetic quality and fast practical execution.

Cosine-scheduled noise scaling and v-prediction for sharper color accuracy. A small, precise intervention with outsized visual impact.

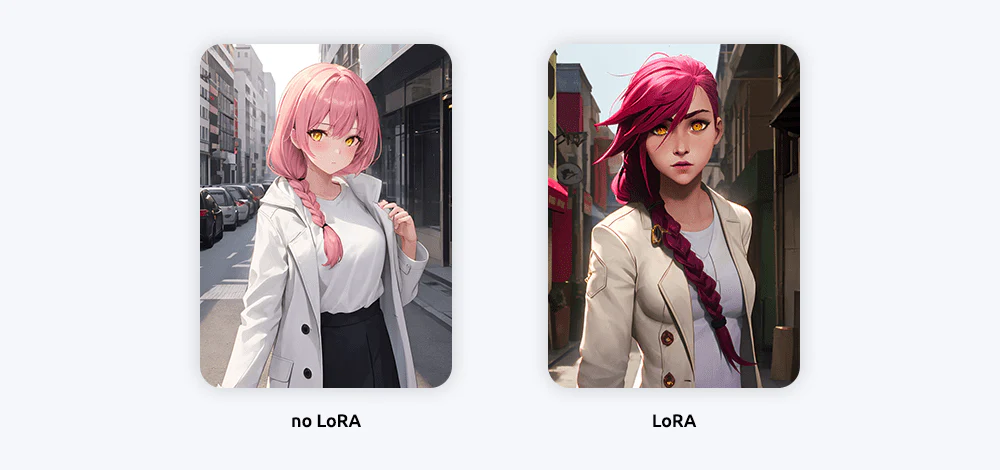

Depth, canny, and pose conditioning as lightweight LoRA adapters. Made fine-grained control practical and accessible.

Extended latent diffusion into the temporal domain for video generation.

Next-generation architecture work around rectified flow transformers. ICML '24 Best Paper. Directly informed the FLUX MM-DiT design.

12B-parameter MM-DiT built from scratch. Rectified flow matching, 3D RoPE, guidance distillation. Shipped dev, schnell, and pro in four months.

Continued iteration on FLUX quality, behavior, and deployment characteristics.

Ecosystem-level product work around the FLUX model family: tooling, not just flagship models.

Ultra-high-quality production model. The premium end of the FLUX line.

In-context image editing via sequence concatenation on a 12B FLUX transformer. Core R&D: 2 people, ~2 months. Introduced KontextBench.

Native multi-reference and editing in pretraining. Massively scaled paired data. Extended the Kontext line into the model stack itself.

Efficient small-scale inference model. Rounds out the family from the deployment end.

Seven years across VFX, media production, and software. Built technical instincts and rapid-learning habits in complex production environments.

Pivoted into self-directed ML research. Taught himself the full stack from scratch without a degree, driven by conviction that generative models were the frontier worth investing in.

Joined Stability AI, quickly became lead author of SDXL, and was promoted to Applied Model Lead within eight months. Shipped multiple production models across image and video.

Co-founded Black Forest Labs. Led Kontext, contributed to the FLUX model family, and continues pushing multi-modal research at the frontier.